Decision Tree

Definition: A Decision Tree \(DT\) is a set of rules \(R = \{r_1, r_2, \dots, r_n\}\) that are exhaustive and mutually exclusive. Each rule \(r\) is defined by a pair \((C, v)\), where \(C\) is the set of split conditions and \(v\) is the resulting class.

CPN Module \(s_{DT}\)

The Decision Tree is the atomic leaf module of the hierarchy — it contains no substitution transitions. Its definition is:

with:

\(PT(P_{in}) = In\) — the input feature vector enters through this port.

\(PT(P_{out}) = Out\) — the classification result exits through this port.

\(T_{sub} = \emptyset\) — no substitution transitions; all transitions are regular rule transitions.

Mapping \(\Psi(DT \to s_{DT})\):

Each rule \(r_i = (C_i, v_i)\) is converted into a guarded transition \(t_i\):

Input arc: \((P_{in}, t_i)\) consumes the feature vector token \(x\).

Guard: \(G(t_i) = \bigwedge_{j \in C_i} (x_{feat_j} \text{ op } \theta_j)\), the conjunction of all split conditions along the path from root to leaf.

Output arc: \((t_i, P_{out})\) produces the class label \(v_i\).

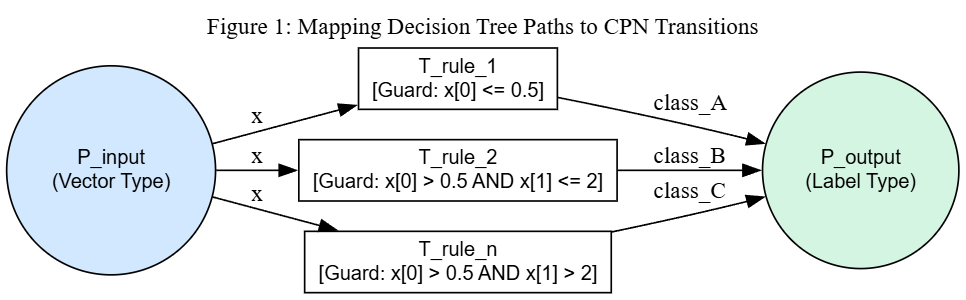

The figure below shows the CPN structure for a Decision Tree module. The input port place \(P_{input}\) (colour type \(\text{VEC}\)) holds the feature vector token \(x\), which is available to all transitions via their input arcs. Each transition \(T\_rule\_i\) has a guard composed of the conjunction of split predicates along its tree path. Since the guards are derived from complementary <= / > splits at every internal node, exactly one transition is enabled for any complete input, consuming \(x\) and depositing the class label in \(P_{output}\).

CPN module \(s_{DT}\): all leaf paths of a Decision Tree become guarded transitions between port places \(P_{input}\) (In) and \(P_{output}\) (Out).

Invariance Property: Due to the mutually exclusive nature of the rules in a DT, for any initial marking \(M_0\) containing an input token \(x \in \mathbb{R}^d\), exactly one transition \(t \in T\) will be enabled, guaranteeing total determinism within the module.

Note

This property holds under the assumption that the input vector \(x\) contains a value for every feature used by the tree. If any feature is missing, no transition may be enabled and the net falls back to the classifier’s default class.

Numerical Induction Example

The following example demonstrates that the CPN module construction preserves the determinism invariant for a tree with \(n\) rules and remains valid when extended to \(n+1\) rules.

Setup: Consider a 2-dimensional feature space \((x_1, x_2)\) with classes \(\{A, B, C\}\).

Base case \((n = 2)\):

A minimal Decision Tree with a single split on \(x_1\) at threshold \(\theta = 5.0\):

Rule |

Guard |

Class |

|---|---|---|

\(r_1\) |

\(x_1 \leq 5.0\) |

\(A\) |

\(r_2\) |

\(x_1 > 5.0\) |

\(B\) |

The CPN module is:

with \(T = \{t_1, t_2\}\), \(G(t_1) = [x_1 \leq 5.0]\), \(G(t_2) = [x_1 > 5.0]\).

Verification with input \(x = (3.0, \; 7.0)\):

\(G(t_1) = [3.0 \leq 5.0] = \text{true}\) — enabled, produces \(A\).

\(G(t_2) = [3.0 > 5.0] = \text{false}\) — disabled.

Exactly one transition fires. \(\checkmark\)

Verification with input \(x = (8.0, \; 2.0)\):

\(G(t_1) = [8.0 \leq 5.0] = \text{false}\) — disabled.

\(G(t_2) = [8.0 > 5.0] = \text{true}\) — enabled, produces \(B\).

Exactly one transition fires. \(\checkmark\)

Inductive step \((n = 2 \to n + 1 = 3)\):

The tree grows by splitting the leaf \(r_2\) (guard \(x_1 > 5.0\)) on feature \(x_2\) at threshold \(\theta = 4.0\). This replaces \(r_2\) with two new rules, each inheriting the parent guard:

Rule |

Guard |

Class |

|---|---|---|

\(r_1\) |

\(x_1 \leq 5.0\) |

\(A\) |

\(r_2'\) |

\(x_1 > 5.0 \;\wedge\; x_2 \leq 4.0\) |

\(B\) |

\(r_3\) |

\(x_1 > 5.0 \;\wedge\; x_2 > 4.0\) |

\(C\) |

The updated CPN module is:

with \(T = \{t_1, t_2', t_3\}\).

Why the invariant is preserved: The split replaces one leaf with two children connected by complementary predicates (\(\leq\) / \(>\)). The original guard \(G(t_2)\) is partitioned into two strictly narrower, non-overlapping regions:

where \(\dot\cup\) denotes disjoint union. Since \(G(t_1)\) was already disjoint from \(G(t_2)\) by the inductive hypothesis, it remains disjoint from both \(G(t_2')\) and \(G(t_3)\). Therefore the guards \(\{G(t_1), G(t_2'), G(t_3)\}\) form a partition of the input space.

Verification with input \(x = (8.0, \; 2.0)\):

\(G(t_1) = [8.0 \leq 5.0] = \text{false}\) — disabled.

\(G(t_2') = [8.0 > 5.0] \wedge [2.0 \leq 4.0] = \text{true} \wedge \text{true} = \text{true}\) — enabled, produces \(B\).

\(G(t_3) = [8.0 > 5.0] \wedge [2.0 > 4.0] = \text{true} \wedge \text{false} = \text{false}\) — disabled.

Exactly one transition fires. \(\checkmark\)

Verification with input \(x = (8.0, \; 6.0)\):

\(G(t_1) = [8.0 \leq 5.0] = \text{false}\) — disabled.

\(G(t_2') = [8.0 > 5.0] \wedge [6.0 \leq 4.0] = \text{true} \wedge \text{false} = \text{false}\) — disabled.

\(G(t_3) = [8.0 > 5.0] \wedge [6.0 > 4.0] = \text{true} \wedge \text{true} = \text{true}\) — enabled, produces \(C\).

Exactly one transition fires. \(\checkmark\)

Conclusion: By induction on the number of leaves, every binary split preserves the partition property of the guard set. Therefore, for any Decision Tree with \(n\) rules, the CPN module \(s_{DT}^{(n)}\) guarantees that exactly one transition is enabled for every valid input \(x \in \mathbb{R}^d\).